Processing big data is one of the most complex challenges organizations face. It becomes even more complex when large volumes of data arrive in real time.

In this post, we will review what big data processing is, how it is done, and learn more about two of the most popular data processing tools: Apache Kafka and Spark.

What is data processing? How is it done?

Data processing is any operation or set of operations performed on data, whether or not by automated means. It can be thought of as collecting, ordering, and organizing information so that it can be interpreted logically and appropriately.

When a user queries a database, it is data processing that produces the results: the information returned is the output of that processing. This is why data processing sits at the heart of information technology.

Traditional data processing was performed using simple software. With the advent of big data, things have changed. There is no fixed threshold, but big data commonly refers to datasets measured in hundreds of terabytes or in petabytes, too large for traditional tools to handle.

In addition, this information is updated continuously. Examples include data from contact centers, social media, and stock exchange trading. Such data is also called a data stream: a constant, unbounded flow of data. Its main characteristic is that the data has no defined limits, so it is not possible to say when the stream starts or ends.

In stream processing, data is processed as soon as it arrives at its destination. Some authors refer to this as real-time or online processing. The alternative is block, batch, or offline processing, where blocks of data are processed in time frames of hours or days. A batch job often runs nightly and consolidates the day’s data. Other reports may be generated on a weekly or monthly schedule.
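The difference between the two approaches can be sketched in a few lines of plain Python. This is only an illustration: the event values and the function names `process_stream` and `process_batch` are made up for the example, and no real streaming framework is involved.

```python
from typing import Iterable, Iterator, List


def process_stream(events: Iterable[int]) -> Iterator[int]:
    """Stream style: emit an updated running total as each event arrives."""
    total = 0
    for value in events:
        total += value
        yield total  # a result is available immediately after every event


def process_batch(events: Iterable[int], batch_size: int) -> List[int]:
    """Batch style: collect events into fixed-size blocks, then sum each block."""
    results: List[int] = []
    block: List[int] = []
    for value in events:
        block.append(value)
        if len(block) == batch_size:
            results.append(sum(block))
            block = []
    if block:  # process the final, partially filled block
        results.append(sum(block))
    return results


events = [5, 3, 7, 1, 4]  # hypothetical event values
stream_results = list(process_stream(events))
batch_results = process_batch(events, batch_size=2)
print(stream_results)
print(batch_results)
```

The streaming version produces one result per event, while the batch version produces one result per block and nothing in between.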

The leading platforms for processing big data streams, such as Kafka and Spark, are open source. As a result, they evolve quickly and integrate with many complementary platforms and tools. This lets them receive uninterrupted data streams from many sources at variable speeds.

Let’s take a look at two of the most widely known data processing tools and compare them.

Apache Kafka

Apache Kafka is a messaging system for building streaming applications with continuous data flow. Kafka, originally created at LinkedIn, is log-based: the log is its basic storage format, and new information is always appended to the end of the file.
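The idea of a log as a storage format can be illustrated with a toy in-memory version. This is a sketch of the concept only, not Kafka’s actual implementation; the class name `AppendOnlyLog` and its methods are hypothetical.

```python
from typing import List, Tuple


class AppendOnlyLog:
    """A toy in-memory log: records are only ever appended, never modified."""

    def __init__(self) -> None:
        self._records: List[bytes] = []

    def append(self, record: bytes) -> int:
        """Add a record at the end and return its offset (its position in the log)."""
        self._records.append(record)
        return len(self._records) - 1

    def read(self, offset: int, max_records: int = 10) -> List[Tuple[int, bytes]]:
        """Return up to max_records (offset, record) pairs starting at offset."""
        chunk = self._records[offset:offset + max_records]
        return [(offset + i, record) for i, record in enumerate(chunk)]


log = AppendOnlyLog()
first = log.append(b"user-1 logged in")
second = log.append(b"user-1 viewed page")
# A reader keeps track of its own offset and can re-read from any position.
records = log.read(offset=0)
print(first, second, records)
```

Because existing records never change, a reader only needs to remember an offset to resume where it left off.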

Kafka is well suited to big data because its main feature is high throughput. It also lets you turn batch processing into real-time processing.

Apache Kafka is a publish/subscribe messaging system where applications publish messages and subscribing applications receive messages. Kafka solutions have low latency because the time between publishing and receiving a message can be milliseconds.

How Kafka works

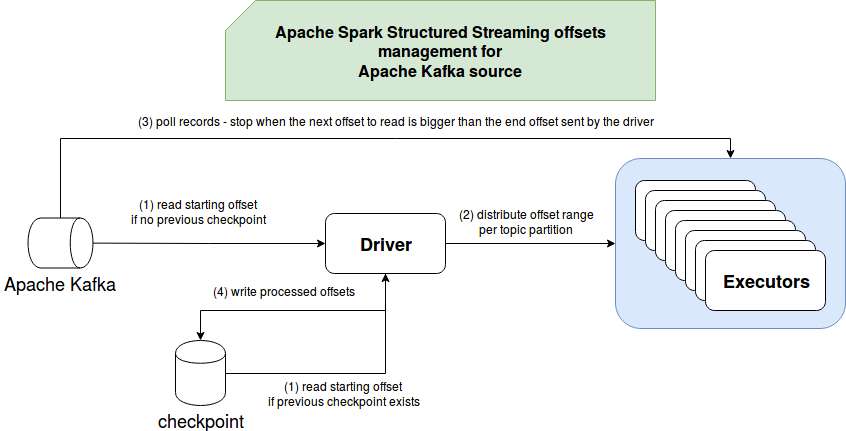

Apache Kafka’s architecture consists of producers, consumers, and the cluster itself. A producer is any application that publishes messages to a cluster. A consumer is any application that receives messages from Kafka. A Kafka cluster is a set of nodes that act as a single instance of a messaging service.

A Kafka cluster consists of multiple brokers. A broker is a Kafka server that receives messages from producers and writes them to disk. Each broker maintains a list of topics, and each topic is divided into several partitions.
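How a message ends up in one partition of a topic can be sketched as follows. This is an assumption-laden illustration: the real Kafka client uses its own hash function, and `zlib.crc32` only stands in for it here. The function name `choose_partition`, the partition count, and the sample messages are all hypothetical.

```python
import zlib
from collections import defaultdict
from typing import Dict, List, Tuple


def choose_partition(key: str, num_partitions: int) -> int:
    """Map a message key to a partition with a stable hash (stand-in for Kafka's own)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 3  # hypothetical partition count for one topic
partitions: Dict[int, List[Tuple[str, str]]] = defaultdict(list)

messages = [("user-1", "login"), ("user-2", "login"), ("user-1", "click"), ("user-1", "logout")]
for key, value in messages:
    partitions[choose_partition(key, NUM_PARTITIONS)].append((key, value))

# All messages with the same key land in the same partition, in arrival order.
user1_partition = choose_partition("user-1", NUM_PARTITIONS)
user1_events = [v for k, v in partitions[user1_partition] if k == "user-1"]
print(user1_events)
```

Hashing the key keeps related messages together, which is what allows their relative order to be preserved.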

Consumers subscribed to a topic then fetch the messages from the brokers. Kafka consumers pull data at their own pace rather than having it pushed to them.

In ZooKeeper-based deployments, Kafka’s configuration is managed by Apache ZooKeeper, which stores cluster metadata such as partition locations, the list of topics, and the available nodes. ZooKeeper thus keeps the various elements of the cluster in sync. (Newer Kafka releases can run without ZooKeeper by using the built-in KRaft mode.)

ZooKeeper matters because Kafka is a distributed system: writes and reads are performed by multiple clients simultaneously. When a node fails, ZooKeeper is used to elect a replacement so that operations can resume.

Use cases

Kafka became popular as a messaging tool, but its versatility goes beyond that. It can be used in a variety of scenarios, such as the examples below.

Messaging

An asynchronous form of communication that decouples the communicating parties. In this model, one side sends data as messages to Kafka, and another application consumes them later.

Activity tracking

Lets you store and process data that tracks users’ interactions with a website, such as page views, clicks, and data entry. This type of activity typically generates large amounts of data.

Metrics

Aggregates data and statistics from multiple sources to generate centralized reports.

Log aggregation

Centrally aggregates and stores log files originating from other systems.

Stream processing

Stream processing pipelines consist of multiple stages in which raw data is consumed from topics and then aggregated, enriched, or transformed and written to other topics.

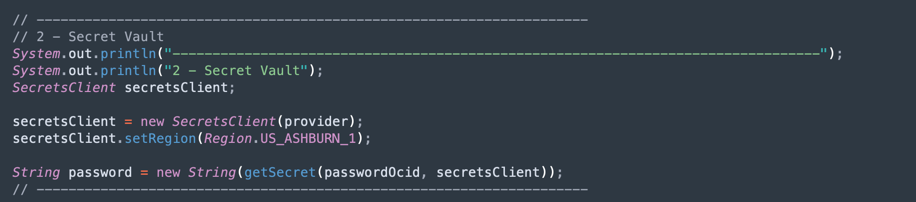

To support these use cases, the platform provides the following core APIs:

- Streams API: Acts as a stream processor that consumes data from one topic, transforms it, and writes it to another topic.

- Connect API: Lets you connect topics to existing systems such as relational databases.

- Producer and Consumer APIs: Enable your applications to publish and consume Kafka data.
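The consume, transform, and produce pattern behind these APIs can be sketched without a real broker. The code below is a toy simulation in plain Python, not the Kafka client API: the `topics` dictionary stands in for a broker, and the functions `produce`, `consume`, and `run_stream_processor` as well as the topic names are hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List

# Toy stand-in for a broker: topic name -> ordered list of messages.
topics: Dict[str, List[str]] = defaultdict(list)


def produce(topic: str, message: str) -> None:
    """Publish a message by appending it to the end of the topic."""
    topics[topic].append(message)


def consume(topic: str, offset: int) -> List[str]:
    """Return every message in the topic from the given offset onward."""
    return topics[topic][offset:]


def run_stream_processor(source: str, sink: str, transform: Callable[[str], str]) -> int:
    """Read all messages from source, transform each one, and write it to sink."""
    processed = 0
    for message in consume(source, offset=0):
        produce(sink, transform(message))
        processed += 1
    return processed


produce("raw-clicks", "home")
produce("raw-clicks", "pricing")
count = run_stream_processor("raw-clicks", "clean-clicks", lambda page: f"/{page}".upper())
print(count, consume("clean-clicks", offset=0))
```

The source topic is left untouched, so other consumers can still read the raw data independently of this processor.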

Strong Points

Replication, partitioning, and ordering

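Messages are appended to a partition in the order they arrive, and partitions are replicated across the brokers of the cluster. Replication protects against data loss, partitioning spreads the load for fast delivery, and ordering is preserved within each partition.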

Data transformation

Apache Kafka also lets you turn batch ETL processing into real-time processing using the Streams API.

Sequential disk access

Apache Kafka is fast even though it keeps messages on disk rather than in memory. Memory access is indeed faster in most situations, especially when reading data at random locations. However, Kafka reads and writes sequentially, and sequential access makes disk very efficient in this case.

Apache Spark

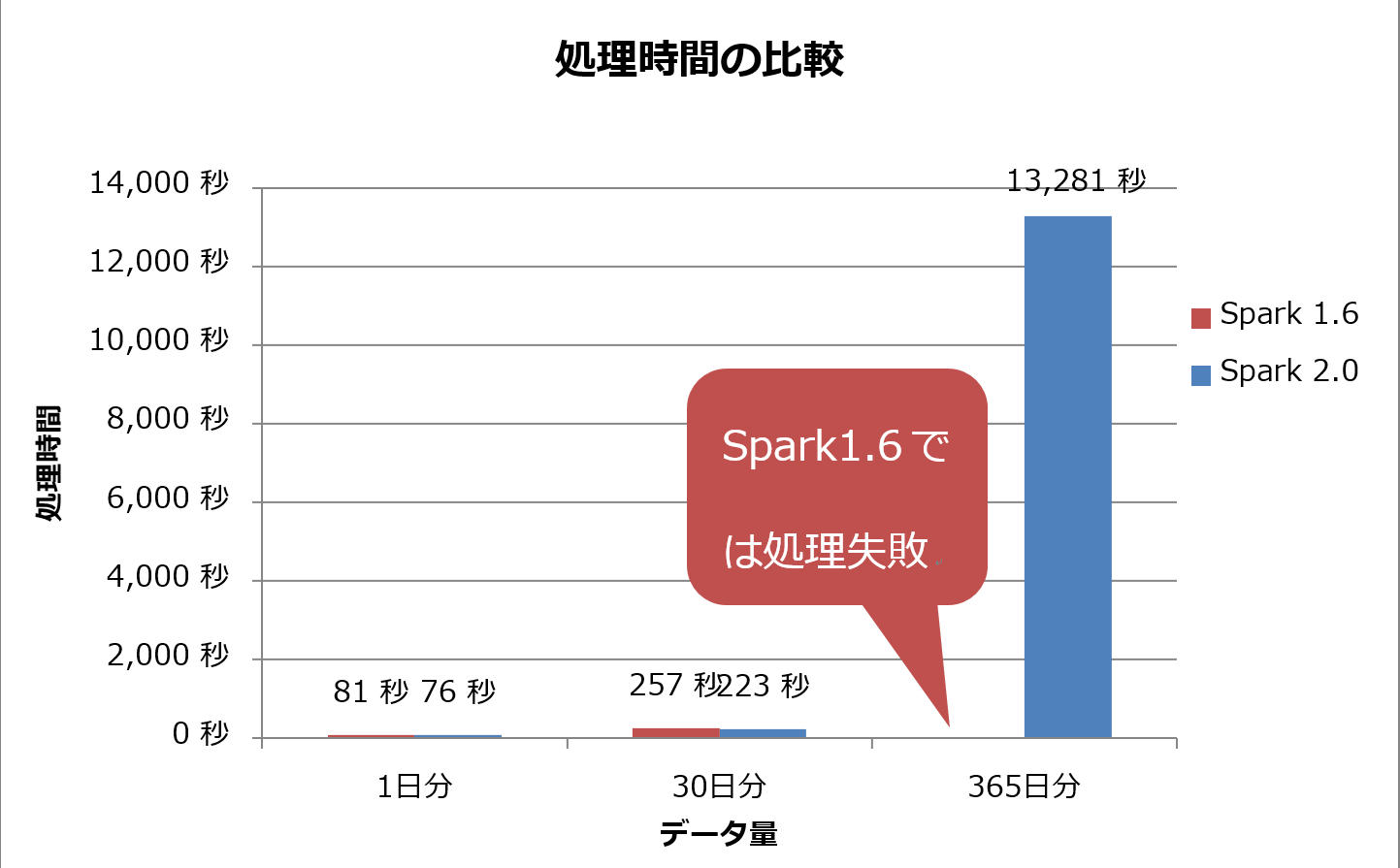

Apache Spark is a big data computing engine with a set of libraries for processing data in parallel across clusters. Spark is an evolution of Hadoop and the MapReduce programming paradigm. Because it makes efficient use of memory and avoids writing intermediate data to disk during processing, it can be up to 100 times faster than Hadoop MapReduce for some workloads.
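For readers unfamiliar with the MapReduce paradigm that Spark builds on, here is a minimal single-machine sketch of its three phases applied to a word count. It uses plain Python rather than Hadoop or Spark, and the function names and input lines are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def map_phase(lines: Iterable[str]) -> List[Tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in every line."""
    return [(word.lower(), 1) for line in lines for word in line.split()]


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group all values that share the same key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped: Dict[str, List[int]]) -> Dict[str, int]:
    """Reduce: combine the values of each key into a single result."""
    return {key: sum(values) for key, values in grouped.items()}


lines = ["Kafka moves data", "Spark processes data"]  # hypothetical input
word_counts = reduce_phase(shuffle_phase(map_phase(lines)))
print(word_counts)
```

In a real cluster the map and reduce phases run in parallel on many machines. Here the intermediate results simply stay in memory, which is the part of the pipeline Spark optimizes.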

Spark consists of three levels.

- Low-level API: This level contains the basic functionality to do the job and any other functionality required by other components. Other important functions of this layer include security, networking, scheduling, and managing logical access to file systems such as HDFS, GlusterFS, and Amazon S3.

- Structured API: This level handles data manipulation through Datasets and DataFrames, which can be read from sources and formats such as Hive, Parquet, and JSON. Spark SQL lets you write queries in SQL to manipulate your data the way you want.

- High level: At the highest level is the Spark ecosystem of libraries such as Spark Streaming, MLlib, and GraphX. They handle streaming ingestion and failure recovery, the creation and validation of traditional machine learning models, and graph processing and graph algorithms.

How Spark works

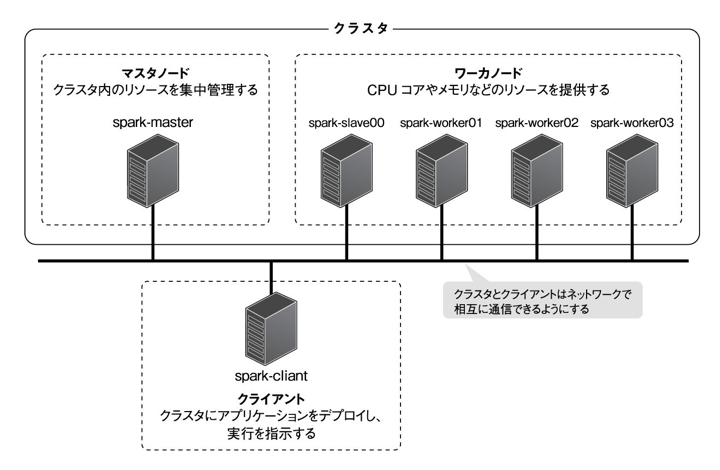

The architecture of a Spark application consists of three main parts:

- Driver program: Responsible for coordinating the execution of data processing.

- Cluster manager: The component responsible for managing the machines in the cluster. Required only if Spark runs distributed.

- Worker node: A machine that executes a program’s tasks. When Spark runs locally, your machine acts as both driver and worker. This way of running Spark is called local mode (standalone mode, by contrast, refers to Spark’s built-in cluster manager).
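The division of labor between driver and workers can be imitated on a single machine. In the sketch below, threads stand in for worker nodes and a thread pool loosely plays the role of the cluster manager. This is an analogy in plain Python, not Spark code, and all function names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def split_into_partitions(data: List[int], num_partitions: int) -> List[List[int]]:
    """Driver side: divide the dataset into roughly equal partitions."""
    return [data[i::num_partitions] for i in range(num_partitions)]


def worker_task(partition: List[int]) -> int:
    """Worker side: compute a partial result (sum of squares) for one partition."""
    return sum(x * x for x in partition)


def driver(data: List[int], num_workers: int) -> int:
    """Driver: schedule one task per partition, then combine the partial results."""
    partitions = split_into_partitions(data, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        partial_results = list(pool.map(worker_task, partitions))
    return sum(partial_results)


total = driver(list(range(1, 11)), num_workers=3)
print(total)
```

The driver never touches individual values. It only splits the work, hands out tasks, and merges what comes back.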

Spark code can be written in a variety of languages, including Scala, Java, Python, and R. The Spark console, called Spark Shell, lets you learn and explore data interactively.

Spark applications consist of one or more jobs and support large-scale data processing.

When it comes to execution, Spark has two deploy modes.

- Client: The driver runs directly in the client process that submitted the application; the resource manager only allocates the executors.

- Cluster: The driver runs inside the cluster, in the application master started by the resource manager. In cluster mode, the application keeps running even if the client disconnects.

Spark must be configured correctly so that linked services such as the resource manager can identify the needs of each execution and provide the best performance. It is therefore up to the developer to know the best way to run Spark jobs, to structure the calls they make, and to size and configure the Spark executors accordingly.

Spark jobs primarily use memory, so it’s common to adjust Spark configuration values for the executors on worker nodes. Depending on your workload, you may find that certain non-default Spark configurations run better. To find out, you can run comparative tests between the available configuration options and the default Spark configuration.
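One way to keep such comparative tests repeatable is to generate the `spark-submit` command line from a dictionary of settings. The helper below is a hypothetical sketch: it only builds the argument list and does not launch anything. The YARN master, the application file name `etl_job.py`, and the memory, core, and instance values are assumptions chosen for illustration, not recommendations.

```python
from typing import Dict, List


def build_spark_submit(app_path: str, deploy_mode: str, conf: Dict[str, str]) -> List[str]:
    """Assemble a spark-submit command line as a list of arguments."""
    if deploy_mode not in ("client", "cluster"):
        raise ValueError(f"unknown deploy mode: {deploy_mode}")
    # Assumes a YARN-managed cluster; other cluster managers use a different --master.
    command = ["spark-submit", "--master", "yarn", "--deploy-mode", deploy_mode]
    for key, value in sorted(conf.items()):
        command += ["--conf", f"{key}={value}"]
    command.append(app_path)
    return command


# Hypothetical values to compare against the defaults in a test run.
candidate_conf = {
    "spark.executor.memory": "4g",
    "spark.executor.cores": "2",
    "spark.executor.instances": "5",
}
command = build_spark_submit("etl_job.py", "cluster", candidate_conf)
print(" ".join(command))
```

Running the same job once with an empty dictionary and once with each candidate configuration gives a simple baseline for comparison.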

Use cases

Apache Spark helps you process large amounts of data, whether real-time or archived, structured or unstructured. Below are some common examples of its use.

Data enrichment

Companies often use a combination of historical customer data and real-time behavioral data. Spark helps you build continuous ETL pipelines that transform unstructured event data into structured data.

Trigger event detection

Spark Streaming allows you to quickly detect and respond to rare or suspicious behavior that could indicate a potential problem or fraud.

Complex session data analysis

Spark Streaming allows you to group and analyze events related to a user’s session, such as activity after logging into your application. This information can also be used to continuously update machine learning models.
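Grouping events into sessions usually means starting a new session whenever a user has been inactive for longer than some threshold. The following plain Python sketch shows that idea on a small list. It is not Spark Streaming code, and the event tuples, the `sessionize` function, and the 30-minute gap are assumptions for the example.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Event = Tuple[str, int, str]  # (user_id, timestamp_seconds, action)


def sessionize(events: List[Event], gap_seconds: int) -> Dict[str, List[List[str]]]:
    """Group each user's actions into sessions separated by gaps of inactivity."""
    sessions: Dict[str, List[List[str]]] = defaultdict(list)
    last_seen: Dict[str, int] = {}
    for user, timestamp, action in sorted(events, key=lambda e: e[1]):
        is_new_session = user not in last_seen or timestamp - last_seen[user] > gap_seconds
        if is_new_session:
            sessions[user].append([])
        sessions[user][-1].append(action)
        last_seen[user] = timestamp
    return dict(sessions)


events = [
    ("alice", 0, "login"),
    ("alice", 60, "view_cart"),
    ("bob", 90, "login"),
    ("alice", 4000, "login"),  # long pause, so this starts a new session
]
result = sessionize(events, gap_seconds=1800)
print(result)
```

A streaming engine applies the same rule incrementally as events arrive, instead of sorting a complete list up front.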

Strong Points

Iterative processing

When your tasks iteratively process data, Spark’s resilient distributed datasets (RDDs) enable multiple in-memory map operations without writing intermediate results to disk.

Graph processing

Spark’s computational model, together with the GraphX API, excels at the iterative computations typical of graph processing.

Machine learning

Spark has a built-in machine learning library, MLlib, with ready-made algorithms that also run in memory.

Kafka vs. Spark

Although Kafka and Spark attract roughly the same level of interest, there are some key differences between the two. Let’s take a look.

#1. Information processing

Kafka is a real-time data streaming and storage tool responsible for transferring data between applications, but on its own it is not enough to build a complete solution. Other tools, such as Spark, are needed for the tasks Kafka does not perform. Spark, on the other hand, is a batch-first data processing platform that can read data from Kafka topics and transform it into joined, structured datasets.

#2. Memory management

Spark uses resilient distributed datasets (RDDs) for memory management. Rather than processing a huge dataset on a single machine, it distributes the data across multiple nodes in the cluster. In contrast, Kafka relies on sequential access, similar to HDFS, and on buffer memory (the operating system’s page cache) for the data it stores on disk.

#3. ETL conversion

Both Spark and Kafka support ETL (extract, transform, load) processes that copy records from one database to another, typically from a transactional (OLTP) system to an analytical (OLAP) one. However, whereas Spark has built-in functionality for ETL, Kafka relies on its Streams API to support it.

#4. Data persistence

RDDs allow Spark to persist data at different storage levels, such as memory or disk, for later use. Kafka, on the other hand, persists messages in its on-disk log, and how long they are kept is defined by the retention settings in your configuration.

#5. Difficulty

Spark is a complete solution and supports a variety of high-level programming languages, which makes it relatively easy to learn. Kafka relies on various APIs and third-party modules, which can make it harder to work with.

#6. Recovery

Both Spark and Kafka offer recovery options. Spark uses RDDs, which record how they were computed, so lost data can be rebuilt in the event of a cluster failure.

Kafka continuously replicates data between the brokers of a cluster, so another broker can take over in the event of a failure.
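On the Spark side, recovery relies on RDDs recording the transformations that produced them (their lineage), so that lost data can be recomputed from the source. The toy class below illustrates that idea in plain Python. It is a conceptual sketch, not Spark’s implementation, and `LineageDataset` and its methods are hypothetical names.

```python
from typing import Callable, List, Optional


class LineageDataset:
    """Toy dataset that records the steps used to derive it (its lineage)."""

    def __init__(self, source: List[int], steps: Optional[List[Callable[[int], int]]] = None) -> None:
        self._source = source
        self._steps: List[Callable[[int], int]] = list(steps or [])
        self._cached: Optional[List[int]] = None

    def map(self, fn: Callable[[int], int]) -> "LineageDataset":
        """Return a new dataset whose lineage has one more transformation."""
        return LineageDataset(self._source, self._steps + [fn])

    def collect(self) -> List[int]:
        """Return the data, recomputing it from the source if no copy is held."""
        if self._cached is None:
            data = list(self._source)
            for step in self._steps:
                data = [step(x) for x in data]
            self._cached = data
        return self._cached

    def simulate_failure(self) -> None:
        """Drop the in-memory copy, as if the node holding it had failed."""
        self._cached = None


dataset = LineageDataset([1, 2, 3]).map(lambda x: x + 1).map(lambda x: x * 10)
before = dataset.collect()
dataset.simulate_failure()
after = dataset.collect()  # rebuilt by replaying the recorded steps
print(before, after)
```

Kafka takes the opposite approach: it keeps extra copies of the data on other brokers instead of remembering how to rebuild it.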

Similarities between Spark and Kafka

| Apache Spark | Apache Kafka |
| --- | --- |
| Open source | Open source |
| Building data streaming applications | Building data streaming applications |
| Supports stateful processing | Supports stateful processing |
| Supports SQL | Supports SQL |

Final words

Kafka and Spark are both open source tools written in Scala and Java that allow you to build real-time data streaming applications. They have several things in common, including support for stateful processing, SQL, and ETL. Kafka and Spark can also be used as complementary tools to solve the problem of data transfer complexity between applications.
